Conventional Chunk

- very long

- spanning multiple sections

- isolating heading from subsequent content

POMA PrimeCut is a document ingestion and chunking engine that preserves hierarchical document structure, eliminates context poisoning, and produces clean, semantically coherent chunks at unmatched accuracy per token.

Engineers optimizing RAG systems spend most of their time tuning retrieval: adjusting similarity thresholds, re-ranking results, switching embedding models. These interventions treat symptoms. The underlying pathology is how documents are parsed and chunked upstream — a step most pipelines delegate to naive text splitters or PDF-to-text extractors that have no awareness of document semantics.

The result is a vector store filled with structurally corrupted, semantically diluted embeddings. No retrieval strategy fully recovers from that.

- Chunks containing content from multiple unrelated sections produce embeddings that represent neither topic accurately.

- Document hierarchy (headings, tables, section boundaries) is discarded before chunking begins.

- Fixed-size chunking severs semantic units at arbitrary character or token limits.

These are not edge cases. They are the default behavior of general-purpose text processing pipelines applied to structured documents. Fixing retrieval without fixing ingestion is rearranging deck chairs. PrimeCut addresses all three failure modes at the source.
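To make these failure modes concrete, here is a minimal Python sketch of a naive fixed-size splitter. The function name `fixed_size_chunks`, the sample text, and the 40-character window are illustrative assumptions, not part of PrimeCut or any specific library.

```python
def fixed_size_chunks(text: str, size: int) -> list[str]:
    """Split text into consecutive windows of `size` characters, ignoring structure."""
    return [text[i:i + size] for i in range(0, len(text), size)]


# Hypothetical two-section document (Markdown-style headings).
doc = (
    "# Refund Policy\n"
    "Refunds are issued within 30 days.\n"
    "# Shipping\n"
    "Orders ship within 2 business days.\n"
)

chunks = fixed_size_chunks(doc, 40)
for i, chunk in enumerate(chunks):
    print(i, repr(chunk))

# The first chunk keeps the "Refund Policy" heading but loses the end of its
# sentence; the second chunk mixes the tail of the refund sentence with the
# "Shipping" heading and part of its body.
```

Nothing is lost in the split (joining the chunks restores the document), yet each embedding would be computed over a fragment that either lacks its heading or straddles two topics.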

Every document carries an internal logic: a hierarchy of headings, sub-sections, tables, lists, and supporting elements that define what content belongs together and why. That structure is not decoration — it is the semantic map of the document.

Standard ingestion pipelines discard this map. They extract raw text and hand it to a chunker that has no knowledge of where one idea ends and another begins.

PrimeCut understands your document’s content hierarchy before chunking — preserving structural relationships, eliminating context poisoning, and producing semantically coherent chunksets that make every downstream RAG component more accurate by default.
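As a rough illustration of hierarchy-aware chunking in general, the sketch below splits Markdown-style text at heading boundaries and attaches the full heading path to each chunk. It is not PrimeCut's algorithm, which this page does not describe; the `Chunk` class, `heading_aware_chunks` function, and sample text are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Chunk:
    path: List[str]                      # heading breadcrumb, outermost first
    lines: List[str] = field(default_factory=list)

    def text(self) -> str:
        """Render the chunk with its breadcrumb so the heading travels with the body."""
        return " > ".join(self.path) + "\n" + "\n".join(self.lines)


def heading_aware_chunks(markdown: str) -> List[Chunk]:
    stack: List[Tuple[int, str]] = []    # (level, title) of currently open headings
    chunks: List[Chunk] = []
    current: Optional[Chunk] = None
    for raw in markdown.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#"):         # simplification: any leading '#' is a heading
            level = len(line) - len(line.lstrip("#"))
            title = line[level:].strip()
            # Close headings at the same or a deeper level before opening this one.
            while stack and stack[-1][0] >= level:
                stack.pop()
            stack.append((level, title))
            current = None               # the next body line starts a new chunk
        else:
            if current is None:
                current = Chunk(path=[title for _, title in stack])
                chunks.append(current)
            current.lines.append(line)
    return chunks


sample = """
# Handbook
## Refunds
Refunds are issued within 30 days.
## Shipping
Orders ship within 2 business days.
"""

result = heading_aware_chunks(sample)
for chunk in result:
    print(chunk.text())
    print("---")
```

Each chunk stops at the next heading and carries its ancestors with it, so no chunk spans two sections and no body text is separated from its heading. Real documents (PDFs, tables, nested lists) need far more than this heading scan.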

Cybersecurity Guidance for Medical Devices: Quality Systems and Premarket Submission Requirements

[…]

Guidance for Industry and Food and Drug Administration Staff

[…]

Contains Nonbinding Recommendations outline

[…]

B. Designing for Security

When reviewing premarket submissions, FDA intends to assess device cybersecurity based on a number of factors, including, but not limited to, the device's ability to provide and implement the security objectives below throughout the device architecture.

[…]

The extent to which security requirements, architecture, supply chain, and implementation are needed to meet these objectives will depend on but may not be limited to:

[…]

• Its intended and actual environment of use:

[…]

• The risk of patient harm due to vulnerability exploitation.

Most RAG benchmarks measure whether the right chunks were retrieved, not whether the resulting context is actually useful to the LLM. Token waste, attention gaps, and context poisoning go unmeasured.

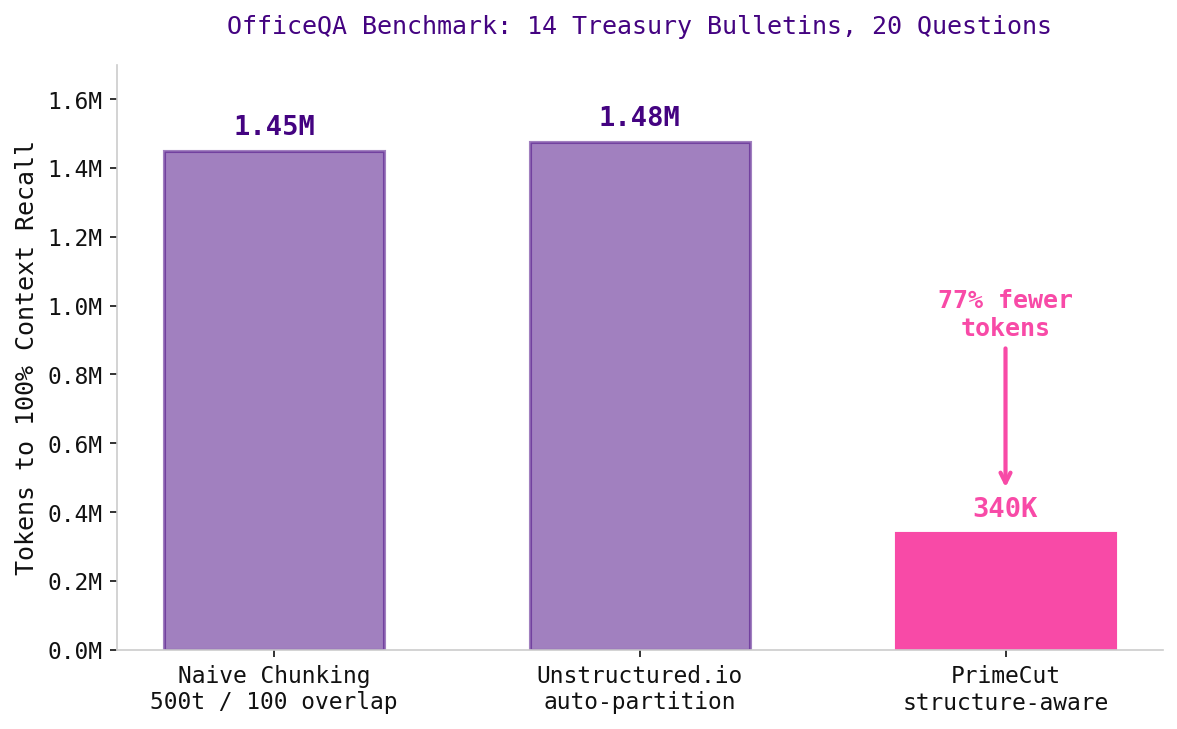

POMA-OfficeQA asked a different question: How many tokens do I need to achieve 100% context recall?
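One way to read that question as a number is sketched below in Python. This is an assumption about how such a metric could be computed, not the published POMA-OfficeQA methodology: the function `tokens_to_full_recall`, the evidence identifiers, and the token counts are all hypothetical.

```python
from typing import Iterable, List, Optional, Set, Tuple


def tokens_to_full_recall(
    ranked_chunks: List[Tuple[int, Set[str]]],
    required: Iterable[str],
) -> Optional[int]:
    """Return the tokens consumed, reading chunks in retrieval order, until every
    required piece of evidence has appeared. Return None if recall never reaches 100%.

    ranked_chunks: (token_count, evidence_ids_contained) per retrieved chunk.
    """
    remaining = set(required)
    total = 0
    for token_count, evidence in ranked_chunks:
        if not remaining:
            break
        total += token_count          # every chunk read costs tokens, useful or not
        remaining -= evidence
    return total if not remaining else None


# Hypothetical retrieval result: the second chunk adds tokens but no evidence.
ranked = [(120, {"a"}), (300, set()), (80, {"b"}), (200, {"c"})]

cost = tokens_to_full_recall(ranked, {"a", "b"})
missing = tokens_to_full_recall(ranked, {"a", "z"})
print(cost, missing)
```

Under this reading, a chunk that contributes no evidence still counts against the budget, which is how token waste shows up in the score instead of going unmeasured.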

PrimeCut ships in two tiers. Both preserve document hierarchy. Both eliminate context poisoning. The difference is in how they handle visual content and compute — matched to the complexity of your documents and your budget.

Simple hierarchical chunking for well-structured documents.

Full structural and visual intelligence for complex, mixed-content documents.

PrimeCut sits at the ingestion layer of your RAG pipeline — upstream of your vector database, your embedding model, and your retrieval logic. It receives documents. It returns structured, hierarchically-bounded chunksets.

The SDK is lightweight. The API is flexible. And PrimeCut's output schema is consistent across both configurations.

Compatible with: